I’ve been learning a lot on Kubernetes these last couple months, and wanted to get my homelab setup to a point to makes deploying clusters easy and reliable. When I first started, I simply used minikube and docker on my desktop. However, the need for something more “robust” and “enterprise” quickly arose.

Being relatively new to Kubernetes (k8s), I first needed information. I’m in the middle of Kubernetes Fundamentals (LFS285) from LinuxFoundation.org. Additionally, I was lucky enough to have Nigel Poulton send a copy of The Kubernetes Book ( Klingon Edition - seriously!) for finding the hidden Klingon in the “code” in the background of the book cover. These 2 resources will keep me busy for the rest of this year, as well as many blogs on various Linux, docker, and kubernetes steps I’ve found along the way.

The Architecture

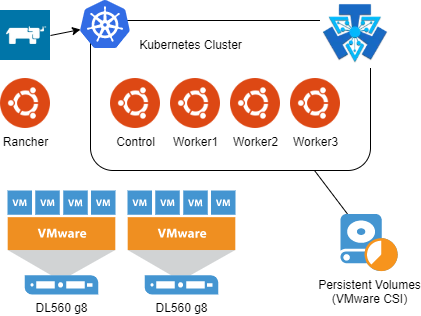

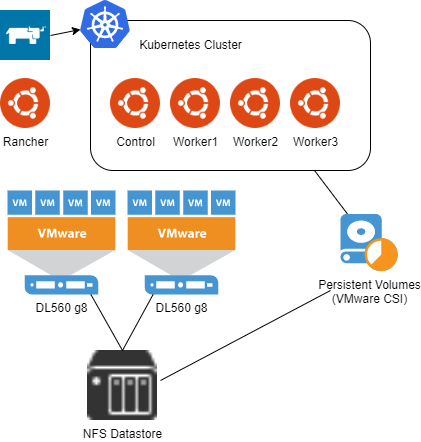

Originally, I thought about using Raspberry Pi’s for my k8s lab, but realized that I didn’t want to be on ARM cpu. Since I already had a VMware homelab with plenty of space, using it made the most sense. I started by deploying 4 Ubuntu VMs and then using NFS storage for persistent storage in the cluster I created. It worked fine, but my NFS storage (Synology) didn’t support the ability to snapshot my persistent volumes.

Next, I used the same Ubuntu cluster, added a second disk to each VM, and deployed Ceph for my cluster’s persistent storage. Unfortunately, I kept running into issues and in the end, abandoned that approach.

Since I had just upgraded my VMware lab to vSphere 7, I decided to use the vSphere-CSI to provide persistent volumes to my cluster. Again, out of my depth, I found it hard to navigate getting the proper taints on my existing cluster, getting the VMware CPI installed, and getting the VMware CSI installed and setup within my cluster. This led to the final solution:

Rancher.

I deployed a single Ubuntu VM, and installed Rancher on it. Then, within rancher I setup a Node Template for vSphere using my Ubuntu Template. Rancher includes a VMware CPI & CSI app in the catalog to make deploying new clusters with all the requirements for consuming VMware storage very simple.

Installing Rancher

I wanted to have Rancher exist outside any clusters I would make, so using a single Ubuntu VM made sense. And since Rancher runs in docker, it also made deployment super easy. I simply followed Rancher’s single node Docker deployment instructions .

First, I Installed Ubuntu 18.04 LTS on a VM with 2vCPU and 4gb RAM and got it up to date:

sudo apt-get update && sudo apt-get upgrade

Next, was installing Docker. Rancher provides a simple script, so I decided to save a little typing and use that.

curl https://releases.rancher.com/install-docker/19.03.sh | sh

Last, was using a simple docker run command to get Rancher going:

docker run -d --restart=unless-stopped \

-p 80:80 -p 443:443 \

--privileged \

rancher/rancher:latest

That was simple! At this point, All I have is Rancher running in Docker on an Ubuntu VM. Now, in order to get clusters dynamically provisioned, I would need to setup an Ubuntu Template that I can deploy more VMs from.

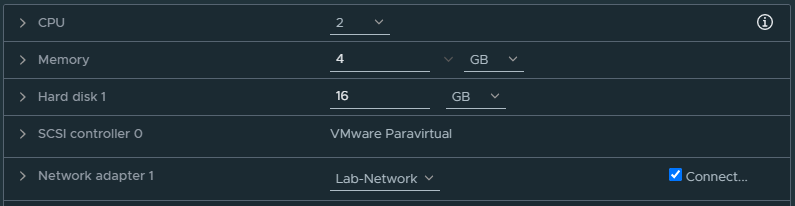

Ubuntu Template

I decided to stick with Ubuntu 18.04 LTS, so I spun up a new VM with 2vCPU, 4gb RAM, and 16gb of disk. I setup the storage controller to be VMware Paravirtual since I will be using the vSphere CSI for storage inside my clusters.

I made sure to enable SSH during the install, and then once the server was ready, I logged in and ran updates.

sudo apt-get update && sudo apt-get upgrade

Next, there are just a couple things we will need to do for Kubernetes to run properly on this. First is disabling swap and making sure it stays that way after a reboot.

sudo swapoff -a

sudo sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

Next, since I will be using DHCP in my environment instead of static IPs, I need to make sure that the Ubuntu VMs get unique addresses. Ubuntu doesn’t use the MAC address, but rather a machine id, and that’s generated during install. By running the following code, we can ensure a unique code every time we deploy from the template.

echo "" | sudo tee /etc/machine-id >/dev/null

Finally, Rancher will use cloud-init to configure the VMs it deploys from out template, so we will want to install that as well.

sudo apt-get install cloud-init

At this point, I don’t want to reboot the server anymore, otherwise I’ll lose it’s “clean” state. I shut down the Ubuntu server, and right clicked and converted it to a template.

What’s next?

In the next blog post , I’ll setup a Node Template inside Rancher as well as add a Catalog App to make the VMware storage integration incredibly simple!