With the release of vSphere 5 comes VMware’s VSA (Virtual Storage Appliance).

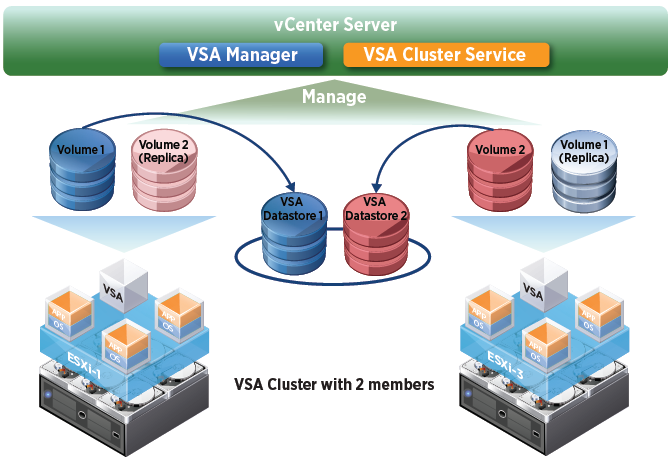

What exactly is it? Well, it’s VMware’s answer to providing shared storage for SMBs that don’t purchase a physical SAN or NAS. It takes the local storage in each ESX box and presents it to the VSA VM, which in turn presents it back to the ESX servers as shared storage. Each ESX server has a VSA VM running, and each VSA holds a replica of the other’s storage.

In order to be supported, VMware requires that your local storage be configured as RAID 10. The VSAs then mirror each other (effectively RAID 1) for HA purposes. What does this mean? It means you lose a lot of storage! Imagine you have four 300GB drives in each ESX server (ignoring formatted capacity). Each server with RAID 10 will have 600GB of space (50%). Now, since each VSA reserves 50% of its storage for the other VSA’s replica, we are left with 300GB of usable space on each physical host. That’s 25% of each host’s original raw capacity (300GB x 4 = 1,200GB).
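If you want to run the numbers for your own hardware, here is a minimal Python sketch of the arithmetic above. The function name `vsa_usable_gb` and its parameters are my own, purely for illustration, and it assumes exactly the two halvings described in this post (RAID 10 on the host, then the VSA replica) while ignoring formatted capacity.

```python
def vsa_usable_gb(drives_per_host: int, drive_gb: float) -> dict:
    """Estimate per-host usable capacity for a VSA setup.

    Assumptions (taken from the example in this post, not from VMware docs):
    - local disks are in RAID 10, which halves raw capacity
    - each VSA reserves half of what is left for the other VSA's replica
    - formatted-capacity overhead is ignored
    """
    raw = drives_per_host * drive_gb
    after_raid10 = raw / 2       # RAID 10 mirrors every stripe
    usable = after_raid10 / 2    # half is reserved for the partner's replica
    return {
        "raw_gb": raw,
        "after_raid10_gb": after_raid10,
        "usable_gb": usable,
        "usable_fraction": usable / raw,
    }


if __name__ == "__main__":
    # The example from this post: four 300GB drives per host
    print(vsa_usable_gb(4, 300))
```

Plugging in your own drive count and size gives a quick sanity check before you commit hardware to this design.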

In the event that one ESX host dies, the other VSA will take over and present both datastores. HA will restart your VMs on the second ESX host, and all will be well.

So, you’ve decided you can live with using only 25%. Then let’s move on!

Requirements:

- vSphere Essentials Plus, Standard, Enterprise, or Enterprise Plus

- Physical vCenter Server

- 2 - 3 ESX hosts (Fresh installs only)

- 4 NICs each

- Local storage in RAID 10

- NO configuration changes on the hosts after the fresh install

When we install the VSA manager on the vCenter server, it will automatically set up our cluster, HA, and DRS, configure our vSwitches, and assign physical NICs (2 each) to them.

For my lab, I have:

- A physical vCenter server running on Windows Server 2008 R2. vCenter is already installed.

- ESX1 - Fresh install of ESXi 5 with 320GB of local storage

- ESX2 - Fresh install of ESXi 5 with 320GB of local storage

The other posts in this series are coming soon:

Part 2 - Installation and Configuration

Part 3 - Management and Failover