While attending VMworld 2016 in Las Vegas this year, I was invited to sit on the Tech Field Day panel for Monday. One of the companies presenting was Docker.

Mike Coleman from Docker presented to the panel and did a great job. While I am familiar with containers conceptually, I have not yet implemented them in a lab or production environment. And, being from the Ops side of DevOps, I was in no rush to jump onto the container bandwagon. However, that’s about to change.

Like most Ops people, I wasn’t thrilled by the idea of containers. They don’t make Ops jobs easier; in fact, it seemed like the opposite. Developers surely could see the benefits of being able to update and scale applications quickly. But doesn’t Ops exist to support the developers and applications for the business?

So what are containers? Mike did a great job explaining. Containers are not VMs. They run on top of an operating system and use the resources of that OS, while containing (hint!) all of the application’s code separately. I enjoyed Mike’s analogy:

Virtual Machines are like houses. Each house is built with its own electrical system, plumbing, heating and cooling, etc. Containers are like apartments. While each apartment has electric service, plumbing, and heating and cooling, they are all tied into the building’s larger system. So an apartment can’t exist outside of the building and its resources, but it makes use of them.

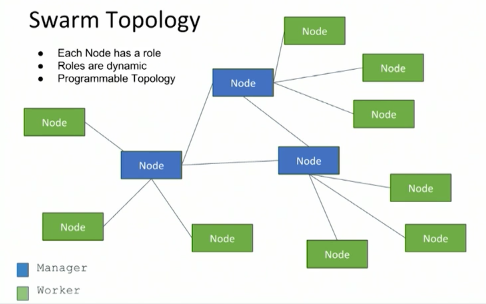

Mike also talked about Docker Swarm. Swarm handles clustering, scheduling, and orchestration. It existed before, but as of Docker 1.12 Swarm is no longer a stand-alone component and is instead integrated into Docker Engine. Not being familiar with Docker, I found this slightly above my head at first, but as Mike talked more and did a live demonstration of its capabilities, I started to understand how powerful this is for the container ecosystem.

There was certainly a lot to cover, and I recommend that you watch the presentation videos from Docker below:
